RISE (Robot à Interaction Sociale Ergonomique, i.e. Ergonomic Social Interaction Robot) was our Master 1 project, supervised by Jean-Charles MARTY and Amélie Cordier. The project lasted throughout the first year of our Master's degree in Computer Science. We were a team of five students.

The objective was to improve the robot's social interaction. More precisely, the initial question was:

Can a robot learn to play the Tower of Hanoi using only its emotional skills?

For this project we chose Cozmo, because it is one of the few robots with such a rich set of emotional expressions and animations.

Here is the video of the Tower of Hanoi scenario:

As you can see, Cozmo becomes more and more angry when the user makes a wrong move. If the user makes a lot of mistakes, the robot gives clues to help. When the user gets it right, Cozmo becomes happier.

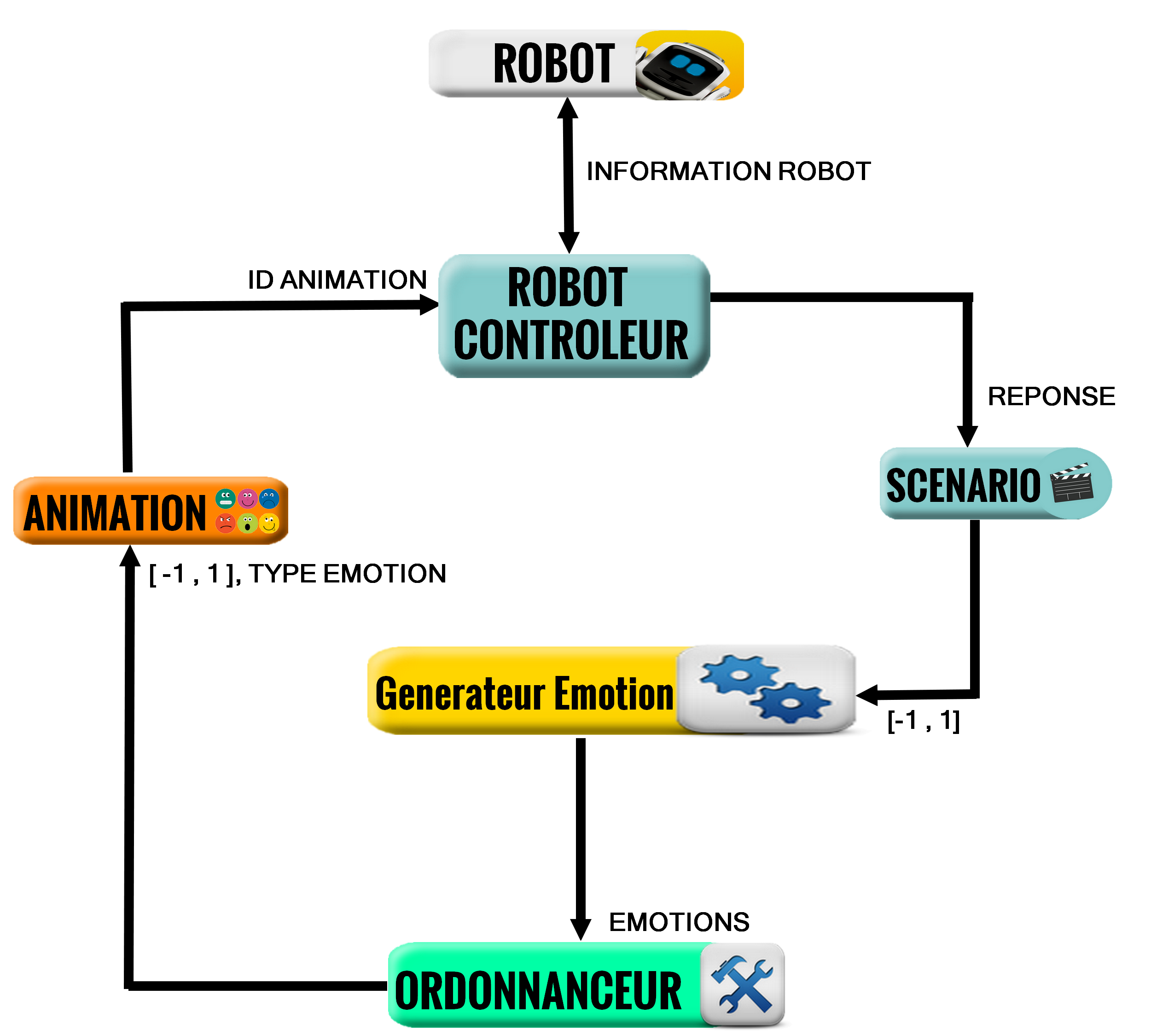

The robot architecture is made of six parts:

The robot environment

It corresponds to all the information the robot gets through its sensors (audio, video, etc.) or from a connected object (the Cozmo cube).

The robot controller

It is used to control the robot: playing animations, reading sensor values, etc.

The scenario

It defines what the robot needs to do. In our video, the scenario was learning how to play the Tower of Hanoi.
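To make the scenario's role concrete, here is a minimal sketch of a Tower of Hanoi scenario that turns user moves into "good"/"wrong" events and offers a clue after repeated mistakes. The class name `HanoiScenario`, the rule that a move is "good" when it matches the next move of the optimal solution, the assumption that a wrong move is undone before the next attempt, and the `hint_after` threshold are all hypothetical choices for illustration, not the project's actual code.

```python
from typing import List, Optional, Tuple

Move = Tuple[int, int]  # (source peg, target peg), pegs numbered 0, 1, 2


def optimal_moves(n: int, src: int = 0, dst: int = 2, aux: int = 1) -> List[Move]:
    """Standard recursive Tower of Hanoi solution (2**n - 1 moves)."""
    if n == 0:
        return []
    return (optimal_moves(n - 1, src, aux, dst)
            + [(src, dst)]
            + optimal_moves(n - 1, aux, dst, src))


class HanoiScenario:
    """Hypothetical scenario: judges each user move and offers clues.

    Assumes a wrong move is undone before the next attempt, so the
    expected move only advances after a correct one.
    """

    def __init__(self, n_disks: int = 3, hint_after: int = 3):
        self.solution = optimal_moves(n_disks)
        self.step = 0          # index of the next expected move
        self.mistakes = 0      # consecutive wrong moves
        self.hint_after = hint_after

    @property
    def finished(self) -> bool:
        return self.step >= len(self.solution)

    def on_user_move(self, move: Move) -> str:
        """Return the event sent to the emotional generator: "good" or "wrong"."""
        if self.finished:
            raise ValueError("the puzzle is already solved")
        if move == self.solution[self.step]:
            self.step += 1
            self.mistakes = 0
            return "good"
        self.mistakes += 1
        return "wrong"

    def hint(self) -> Optional[Move]:
        """After enough consecutive mistakes, reveal the next expected move."""
        if self.mistakes >= self.hint_after and not self.finished:
            return self.solution[self.step]
        return None
```

The strings returned by `on_user_move` are the kind of scenario events an emotional generator would consume.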

The Emotional Generator (EG)

It subscribes to data from the environment (audio stream, video stream, etc.) and returns a value describing the robot's emotional state. An EG represents one particular emotion. In the video there is a single EG, covering the happy/angry axis. It receives events from the scenario (the user makes a right or wrong move) and returns a value between -1 and 1 (1: the robot is very happy, -1: the robot is not happy at all, i.e. angry).
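A minimal sketch of the happy/angry EG described above, assuming a simple additive update: each "good" event raises the value by a fixed step, each "wrong" event lowers it, and the result is clamped to [-1, 1]. The class name, the neutral starting value of 0 and the `step` parameter are hypothetical; the source only specifies the input events and the output range.

```python
class HappyAngryEG:
    """Hypothetical emotional generator for the happy/angry axis.

    Receives "good"/"wrong" events from the scenario and keeps a value in
    [-1, 1]: 1 means very happy, -1 means not happy (angry).
    """

    def __init__(self, step: float = 0.25):
        self.step = step
        self.value = 0.0  # neutral starting mood (assumption)

    def on_event(self, event: str) -> float:
        if event == "good":
            self.value += self.step
        elif event == "wrong":
            self.value -= self.step
        # clamp to the [-1, 1] range expected by the scheduler
        self.value = max(-1.0, min(1.0, self.value))
        return self.value
```

With this update rule, a run of mistakes makes the robot progressively angrier until it saturates at -1, matching the behaviour seen in the video.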

The scheduler

It collects the outputs of all EGs and selects the most important emotion to play. For example, if there is a loud noise, a surprise EG will take priority over the happy/angry EG.
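One possible selection rule, sketched under stated assumptions: each EG has a fixed priority, EGs whose output is too weak (below a `threshold` in absolute value) are ignored, and among the remaining ones the highest priority wins, with ties broken by intensity. The `EGOutput` type, the priorities and the threshold are hypothetical; the source only says the scheduler picks the most important emotion.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class EGOutput:
    emotion: str      # e.g. "happy_angry", "surprise"
    value: float      # EG output in [-1, 1]
    priority: int     # higher wins (assumed fixed per EG)


def schedule(outputs: List[EGOutput], threshold: float = 0.1) -> Optional[EGOutput]:
    """Pick the emotion to play among EGs whose |value| >= threshold.

    Highest priority wins; ties are broken by the strongest |value|.
    Returns None when no EG is active.
    """
    active = [o for o in outputs if abs(o.value) >= threshold]
    if not active:
        return None
    return max(active, key=lambda o: (o.priority, abs(o.value)))
```

With a surprise EG given a higher priority than the happy/angry EG, a loud noise preempts the happy/angry animation, as in the example above.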

The robot animation

It takes the scheduler output and sends the corresponding animation to the robot controller.
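A minimal sketch of the animation step, assuming a lookup table that maps an emotion and a value range to an animation name. The animation names are placeholders, not actual Cozmo animation identifiers, and `RecordingController` is a hypothetical stand-in for the robot controller that only records what it is asked to play.

```python
from typing import List, Optional, Tuple

# Hypothetical (emotion, lower bound, animation name) table, sorted by
# descending lower bound within each emotion. Names are placeholders.
ANIMATIONS: List[Tuple[str, float, str]] = [
    ("happy_angry", 0.5, "very_happy"),
    ("happy_angry", 0.0, "happy"),
    ("happy_angry", -0.5, "annoyed"),
    ("happy_angry", -1.0, "very_angry"),
    ("surprise", 0.0, "surprised"),
]


class RecordingController:
    """Stand-in for the robot controller: records requested animations."""

    def __init__(self):
        self.played: List[str] = []

    def play_animation(self, name: str) -> None:
        self.played.append(name)


def animate(emotion: str, value: float, controller) -> Optional[str]:
    """Send the first matching animation to the controller and return its name."""
    for emo, lower, name in ANIMATIONS:
        if emo == emotion and value >= lower:
            controller.play_animation(name)
            return name
    return None  # no animation defined for this emotion/value
```

Keeping the mapping in a table separates "which emotion" (the scheduler's job) from "how to show it" (this component), so animations can be changed without touching the EGs.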

Here is the architecture used in this video:

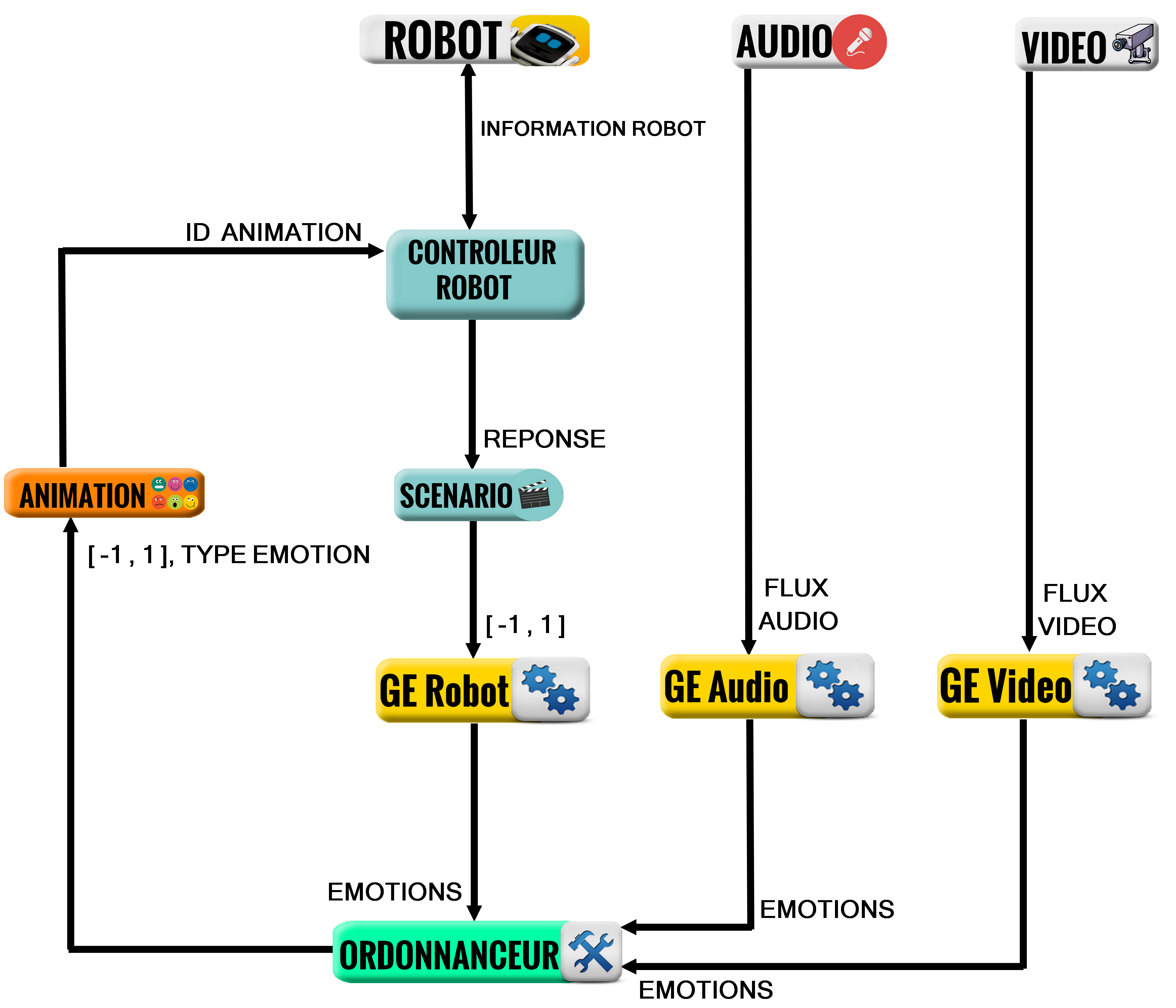

In the video there is just one EG, but our architecture can handle several EGs, each fed by a different type of environment value.
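To illustrate how several EGs can listen to different kinds of environment values, here is a minimal publish/subscribe sketch. The `Environment` hub, the channel names `"audio_level"` and `"scenario_event"`, the normalised loudness in [0, 1] and the `loud` threshold are all hypothetical; the source only states that EGs subscribe to environment data.

```python
from collections import defaultdict
from typing import Callable, DefaultDict, List


class Environment:
    """Hypothetical publish/subscribe hub: each EG subscribes to its channels."""

    def __init__(self):
        self._subs: DefaultDict[str, List[Callable[[object], None]]] = defaultdict(list)

    def subscribe(self, channel: str, callback: Callable[[object], None]) -> None:
        self._subs[channel].append(callback)

    def publish(self, channel: str, data: object) -> None:
        for callback in self._subs[channel]:
            callback(data)


class SurpriseEG:
    """Hypothetical EG fed by audio loudness (normalised to 0..1)."""

    def __init__(self, env: Environment, loud: float = 0.8):
        self.value = 0.0
        self.loud = loud
        env.subscribe("audio_level", self.on_audio)

    def on_audio(self, level: float) -> None:
        self.value = 1.0 if level >= self.loud else 0.0


class HappyAngryEG:
    """Hypothetical EG fed by scenario events ("good" / "wrong")."""

    def __init__(self, env: Environment, step: float = 0.25):
        self.value = 0.0
        self.step = step
        env.subscribe("scenario_event", self.on_event)

    def on_event(self, event: str) -> None:
        delta = self.step if event == "good" else -self.step
        self.value = max(-1.0, min(1.0, self.value + delta))
```

Each EG only reacts to its own channel, so adding a new emotion means adding a new subscriber without modifying the existing ones.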

In the second part of the project, to show that our architecture was generic, we designed another scenario together with Amélie Cordier and her PhD student Alix Gonnot: Cozmo had to moderate a meeting. Each participant had a tablet listing several possible feelings, each of which would lead to an action:

- Someone talks too much

- I get bored

- I want to talk

- The subject is drifting

- No one is listening to me

- The meeting is not productive

Example 1: if three members reported "Bob talks too much", Cozmo would go in front of Bob and play a very angry animation.

Example 2: if you wanted to talk, the robot would go in front of you and play an animation showing that you want to talk.
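The two examples can be sketched as a small aggregation of tablet reports. The threshold of three reporters comes from Example 1; everything else (the `MeetingModerator` class, counting each reporter only once per target, resetting the count after Cozmo reacts, and the animation names) is a hypothetical illustration, not the system used in the project.

```python
from typing import Dict, Optional, Set, Tuple

ANGRY_THRESHOLD = 3  # from Example 1: three members reporting the same person


class MeetingModerator:
    """Hypothetical aggregation of tablet reports into Cozmo actions."""

    def __init__(self, threshold: int = ANGRY_THRESHOLD):
        self.threshold = threshold
        # target -> set of reporters, so one member cannot vote twice
        self.too_much: Dict[str, Set[str]] = {}

    def report(self, reporter: str, feeling: str,
               target: Optional[str] = None) -> Optional[Tuple[str, str]]:
        """Return (participant to go to, animation) when an action is triggered."""
        if feeling == "I want to talk":
            return (reporter, "want_to_talk")
        if feeling == "Someone talks too much" and target is not None:
            voters = self.too_much.setdefault(target, set())
            voters.add(reporter)
            if len(voters) >= self.threshold:
                voters.clear()  # reset once Cozmo has reacted
                return (target, "very_angry")
        return None
```

For example, once Alice, Carol and Dave have each reported that Bob talks too much, `report` returns `("Bob", "very_angry")`; a repeated report from the same member does not count twice.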

For more information, see the paper written by Alix Gonnot.